Beyond the Prompt: How to Architect Reliable AI SEO Agents for Enterprise Growth

The Fallacy of the ‘Magic Prompt’

In the current landscape of AI-driven marketing, social media is flooded with “magic prompts”: single-block instructions that promise a comprehensive SEO audit. For experienced practitioners, however, these are little more than digital coin flips. A single prompt has no tools to fetch and verify real data, no memory to keep results consistent from run to run, and no review layer to catch hallucinations. When an AI is asked to find SEO issues without a structured system, it often describes what a site like this should look like, based on its training data, rather than analyzing what the site actually is.

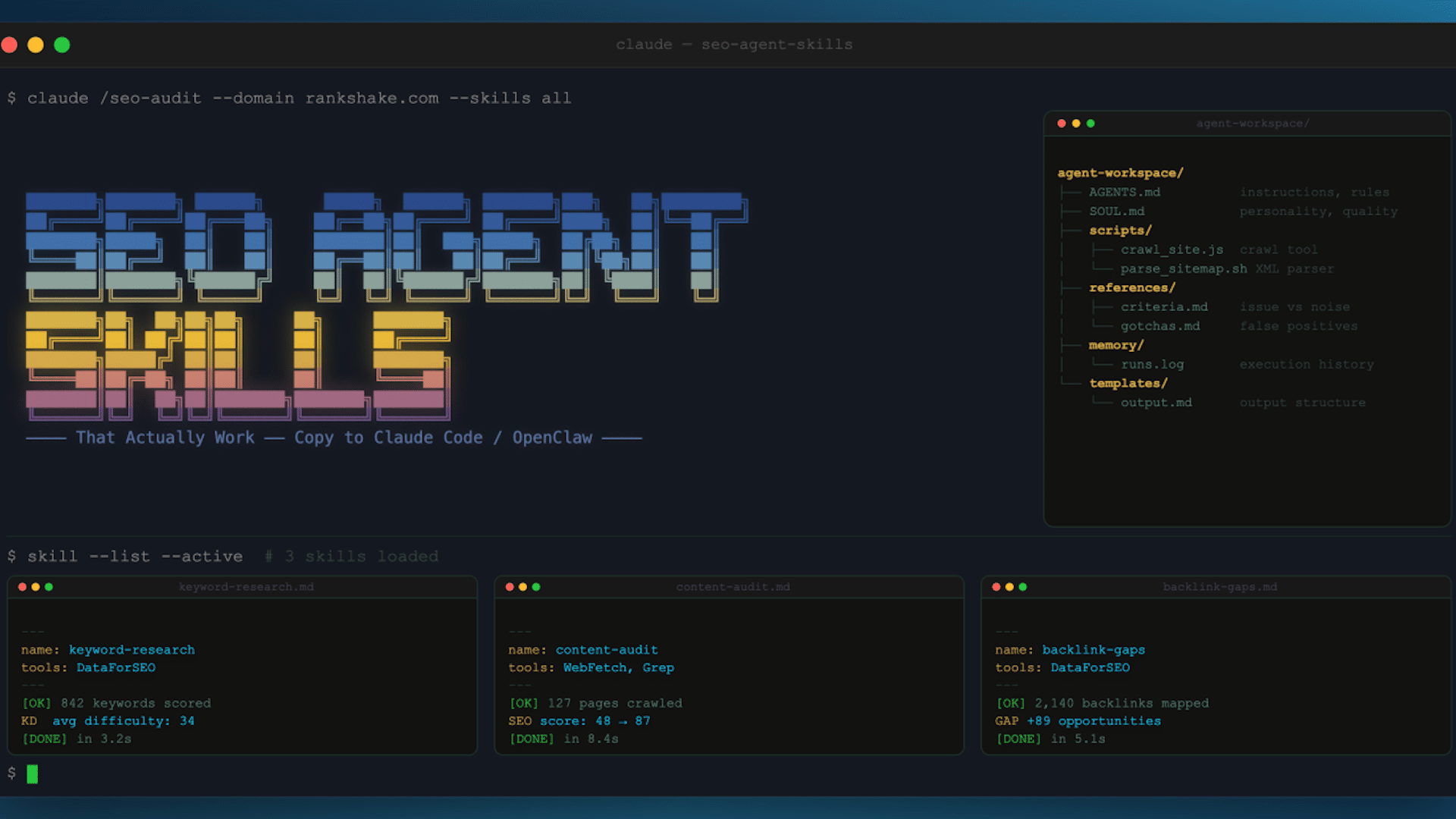

The Workspace Architecture: Building a Digital Employee

To transition from a simple prompt to a reliable SEO agent, you must build a Workspace. A professional agent needs more than a single prompt file: it needs a structured environment that mimics a human employee’s desk, equipped with instructions, tools, and institutional knowledge.

The Essential Workspace Components:

- AGENTS.md (The Instruction Manual): Detailed methodology and step-by-step workflows. Instead of just “crawl the site,” it spells out the sequence: sitemap check → robots.txt analysis → crawl-delay compliance.

- SOUL.md (The Quality Bar): Defines the agent’s personality, core principles, and the standard of excellence required for every output.

- Scripts/ (The Toolbelt): Pre-written, reliable code (e.g., Playwright-based crawlers, XML sitemap parsers). This ensures the agent uses a proven tool rather than inventing a new, potentially broken command every time.

- References/ (The Judgment Center): Documents like criteria.md and gotchas.md that help the agent distinguish between a critical error and noise.

- Memory/ (Institutional Knowledge): Execution logs that allow the agent to compare current results with previous runs, enabling trend analysis.

- Templates/ (The Consistency Guard): Rigid output schemas that ensure the 14th audit looks exactly like the first.
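
To make the layout concrete, here is a minimal Python sketch, assuming the files and folders listed above sit under one root directory. The helper names `check_workspace` and `build_context` are hypothetical, and concatenating the files into one context string is only one way an agent runtime might consume them.

```python
from pathlib import Path

# Hypothetical layout mirroring the workspace components described above.
REQUIRED_FILES = ("AGENTS.md", "SOUL.md")
REQUIRED_DIRS = ("Scripts", "References", "Memory", "Templates")


def check_workspace(root: Path) -> list[str]:
    """Return the names of missing components; an empty list means the layout is complete."""
    missing = [name for name in REQUIRED_FILES if not (root / name).is_file()]
    missing += [name + "/" for name in REQUIRED_DIRS if not (root / name).is_dir()]
    return missing


def build_context(root: Path) -> str:
    """Concatenate the instruction manual, quality bar, and reference docs into one string."""
    missing = check_workspace(root)
    if missing:
        raise FileNotFoundError(f"workspace incomplete, missing: {', '.join(missing)}")
    # Fixed order: methodology first, then the quality bar, then references alphabetically.
    paths = [root / "AGENTS.md", root / "SOUL.md", *sorted((root / "References").glob("*.md"))]
    sections = [
        f"## {p.relative_to(root).as_posix()}\n\n{p.read_text(encoding='utf-8').strip()}"
        for p in paths
    ]
    return "\n\n".join(sections)
```

Keeping the order fixed (methodology, then quality bar, then references) makes every run start from the same context. Scripts/, Memory/ and Templates/ are only checked for existence here, since how they are used depends on the agent runtime.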

Case Study: The Evolution of a Reliable Crawler

Reliability is built through iterative failure. A high-performing crawler typically evolves through five distinct stages of maturity:

- The Naive Phase: Using raw HTTP requests (curl), which modern CDNs and bot-protection layers often block immediately.

- The Scripted Phase: Switching to a headless browser (Playwright) to get past basic blocks, though the crawler often crashes on large sites.

- The Optimized Phase: Adding rate limiting, throttling, and checkpointing so that a crawl can survive a crash and resume where it stopped.

- The Rendering Phase: Introducing full JavaScript rendering to handle Single Page Applications (SPAs) built with React or Next.js.

- The Professional Phase: Integrating templates and memory for stable, repeatable, and comparative reporting.
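
The core of the Optimized Phase fits in a short sketch of a polite crawl loop that follows the AGENTS.md sequence: read the sitemap, check robots.txt, respect the crawl-delay, and checkpoint after every page. This is a minimal Python sketch using only the standard library (`xml.etree.ElementTree` and `urllib.robotparser`). The `fetch` callable is a hypothetical stand-in for whatever actually loads a page (a Playwright-rendered fetch in the later phases), and the user-agent string and JSON checkpoint format are assumptions.

```python
import json
import time
import xml.etree.ElementTree as ET
from pathlib import Path
from typing import Callable
from urllib.robotparser import RobotFileParser

USER_AGENT = "example-seo-audit-bot"  # hypothetical user-agent name
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def urls_from_sitemap(xml_text: str) -> list[str]:
    """Extract <loc> values from a standard <urlset> sitemap (sitemap indexes not handled)."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


def robots_from_text(robots_txt: str) -> RobotFileParser:
    """Parse a robots.txt body that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def crawl(
    urls: list[str],
    fetch: Callable[[str], int],
    robots: RobotFileParser,
    checkpoint: Path,
    default_delay: float = 1.0,
) -> dict[str, int]:
    """Fetch each allowed URL once, throttled, resuming from a JSON checkpoint if one exists."""
    # Resume: reload results saved by an earlier (possibly crashed) run.
    results: dict[str, int] = json.loads(checkpoint.read_text()) if checkpoint.exists() else {}
    # Use the site's Crawl-delay when it declares one, otherwise a conservative default.
    declared = robots.crawl_delay(USER_AGENT)
    delay = default_delay if declared is None else float(declared)
    for url in urls:
        if url in results or not robots.can_fetch(USER_AGENT, url):
            continue  # already fetched, or disallowed by robots.txt
        results[url] = fetch(url)  # e.g. the HTTP status code
        checkpoint.write_text(json.dumps(results))  # checkpoint after every page
        time.sleep(delay)  # fixed-interval throttle between requests
    return results
```

Writing the checkpoint after every page trades a little disk I/O for the ability to restart a crashed run without refetching anything. A production crawler would also need to handle sitemap index files, fetch errors, and retries.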

The Critical Layer: The Reviewer Agent

The most significant quality leap comes not from improving the worker agent but from introducing a Reviewer Agent. That is why, in a professional agentic workflow, the Reviewer is the first thing built. Its sole purpose is to audit the workers by asking:

- Does the evidence actually support the claim?

- Is the severity rating appropriate for the actual business impact?

- Are there duplicate findings across different specialist agents?

By decoupling execution from verification, agencies can push accuracy close to perfect (the figure cited for this approach is up to 99.6%), so that every recommendation reaching a client is one the agency would stake its reputation on.
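
The mechanical half of the reviewer's job can be sketched in code. This minimal Python sketch assumes findings arrive as structured records; the `Finding` fields, the severity scale, and the page-count thresholds in `max_severity_for` are hypothetical placeholders for rules that would normally live in References/criteria.md. Judging whether evidence genuinely supports a claim still needs a second model or a human, so here that check is reduced to "is there any evidence at all".

```python
from dataclasses import dataclass, field, replace

# Hypothetical severity scale; a real workspace would define this in References/criteria.md.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass
class Finding:
    agent: str                 # which specialist agent produced the finding
    url: str
    issue: str                 # normalized issue type, e.g. "missing_canonical"
    severity: str
    affected_pages: int
    evidence: list[str] = field(default_factory=list)


def max_severity_for(affected_pages: int) -> str:
    """Illustrative cap: severity may not exceed what the affected page count justifies."""
    if affected_pages >= 100:
        return "critical"
    if affected_pages >= 10:
        return "high"
    return "medium"


def review(findings: list[Finding]) -> tuple[list[Finding], list[str]]:
    """Return (accepted findings, reviewer notes) after the evidence, severity, and duplicate checks."""
    accepted: list[Finding] = []
    notes: list[str] = []
    seen: set[tuple[str, str]] = set()
    for f in findings:
        # Evidence check (simplified): reject claims that carry no evidence.
        if not f.evidence:
            notes.append(f"rejected {f.issue} on {f.url}: no evidence")
            continue
        # Duplicate check: the same issue on the same URL from another specialist.
        key = (f.url, f.issue)
        if key in seen:
            notes.append(f"dropped duplicate {f.issue} on {f.url} from {f.agent}")
            continue
        # Severity check: downgrade ratings that overstate the impact.
        cap = max_severity_for(f.affected_pages)
        if SEVERITY_RANK[f.severity] > SEVERITY_RANK[cap]:
            notes.append(f"downgraded {f.issue} on {f.url}: {f.severity} -> {cap}")
            f = replace(f, severity=cap)  # copy; the worker's original record is untouched
        seen.add(key)
        accepted.append(f)
    return accepted, notes
```

Because the reviewer is a separate step, its rules can be tightened without touching any of the specialist agents.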

The Validation Standard: The ‘Four Tests’

To ensure AI output is developer-ready and client-worthy, every finding must pass these four benchmarks:

- The Google Engineer Test: Would a search engine expert agree that this is a legitimate issue?

- The Developer Test: Is the instruction specific enough for a developer to fix without asking follow-up questions?

- The Agency Reputation Test: Would I be embarrassed to defend this finding in a meeting with a technical CMO?

- The Implementation Test: Is it an actionable fix (e.g., “compress this specific 3.4MB video”) rather than a vague suggestion (“improve page speed”)?
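
Two of these tests can be partly automated; the other two need judgment. In this minimal Python sketch, the Google Engineer and Agency Reputation verdicts are simply recorded fields supplied by a human or a reviewer model, while the Developer and Implementation tests use deliberately crude heuristics. The `Recommendation` fields, the placeholder-target set, the vague-phrase list, and the regex are all hypothetical.

```python
import re
from dataclasses import dataclass

# Hypothetical examples of non-specific targets and stock vague phrases.
VAGUE_TARGETS = {"", "the site", "site-wide", "all pages"}
VAGUE_PHRASES = ("improve page speed", "optimize content", "enhance seo", "consider improving")
# Signals of a concrete detail: a number, an HTML tag, or a file name with a common extension.
SPECIFIC_DETAIL = re.compile(r"\d|<[a-z]+|\.(jpe?g|png|webp|mp4|js|css)\b")


@dataclass
class Recommendation:
    target: str            # URL, file, or selector the fix applies to
    action: str            # instruction handed to the developer
    expert_agrees: bool    # Google Engineer Test verdict (human or reviewer model)
    defensible: bool       # Agency Reputation Test verdict (human or reviewer model)


def developer_test(rec: Recommendation) -> bool:
    """Fixable without follow-up questions: the fix points at a concrete location."""
    return rec.target.strip().lower() not in VAGUE_TARGETS


def implementation_test(rec: Recommendation) -> bool:
    """Actionable fix: contains a concrete detail and avoids stock vague phrasing."""
    action = rec.action.lower()
    return bool(SPECIFIC_DETAIL.search(action)) and not any(p in action for p in VAGUE_PHRASES)


def four_tests(rec: Recommendation) -> dict[str, bool]:
    """Return a per-test verdict; a finding ships only if every value is True."""
    return {
        "google_engineer": rec.expert_agrees,
        "developer": developer_test(rec),
        "agency_reputation": rec.defensible,
        "implementation": implementation_test(rec),
    }
```

Letting a finding through only when every verdict is True turns the four tests into a hard gate rather than a suggestion.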

Conclusion: Architecture Over Intelligence

The secret to successful AI SEO isn’t finding a smarter LLM; it’s building a smarter architecture. By shifting from “prompt engineering” to “workspace engineering,” SEOs can turn unpredictable AI outputs into a scalable, repeatable production line of high-value technical insights.