The Invisible Barrier: How Managed WordPress Hosting May Be Silently Blocking AI Search Visibility

Introduction: The Mystery of the Disappearing Citations

For many digital marketers and SEO professionals, the current priorities are Generative Engine Optimization (GEO) and Answer Engine Optimization (AEO). The goal is simple: ensure your brand is cited by AI models like ChatGPT, Claude, and Perplexity. However, a critical and invisible technical barrier has emerged. Even when your Google Search Console and traditional SEO metrics look flawless, your content may be completely invisible to the very AI agents you are trying to attract.

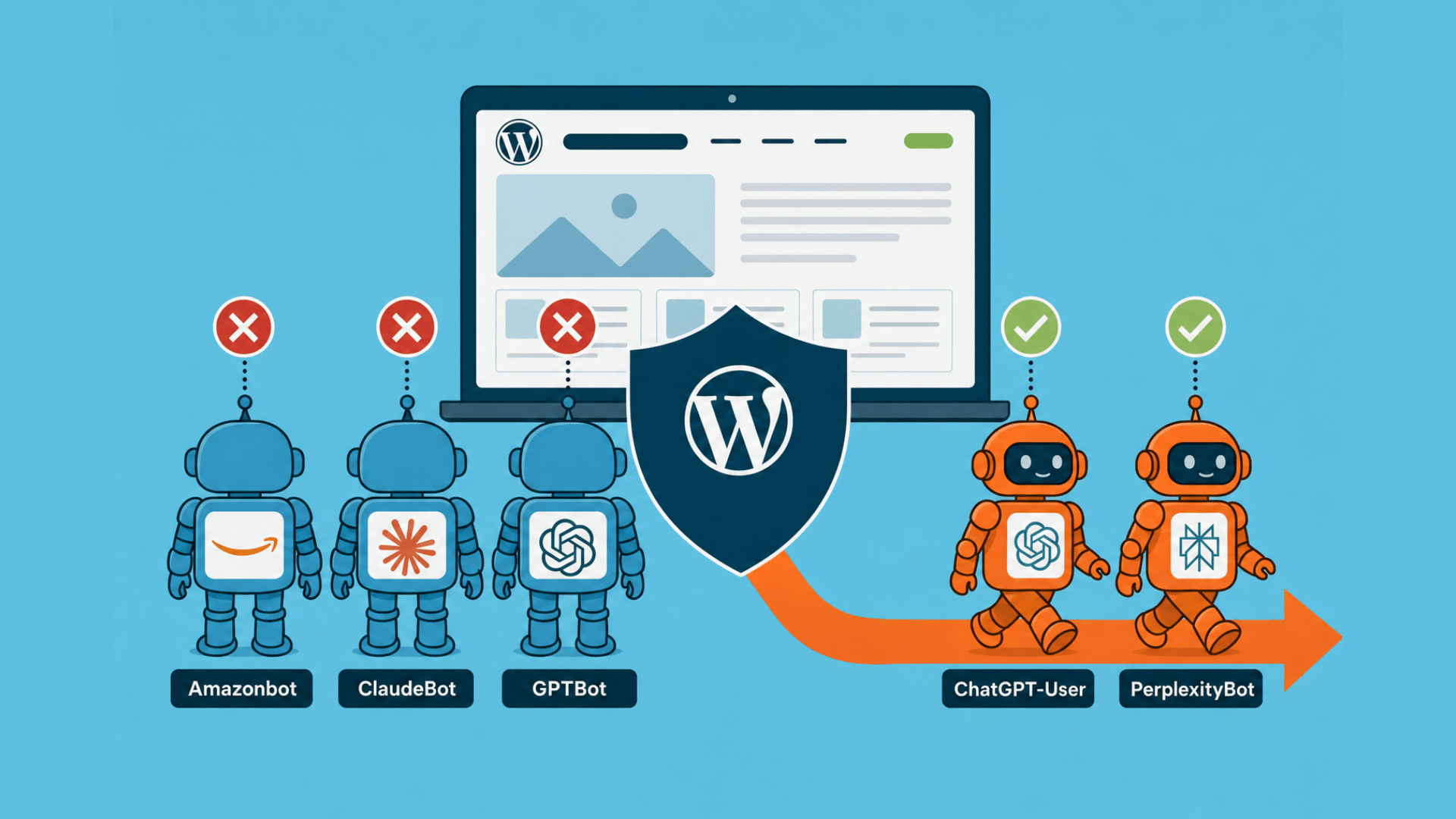

Recent investigations reveal that some managed WordPress hosting environments are blocking AI crawlers at the platform level. These blocks happen at the infrastructure layer, in front of the WordPress application and often behind the customer’s own security layers such as Cloudflare. As a result, they go undetected by standard SEO audits and security plugins.

The Diagnostic Evidence: A Tale of Two Crawlers

The discrepancy becomes evident when you compare citation presence platform by platform. In a recent case study on searchinfluence.com, Google AI Mode showed a 37.8% citation presence, while Claude and Meta AI sat at 0.0%. Because the content was the same for every platform, the most likely variable is access.

Analyzing Cloudflare logs provided the first clue. The logs showed a high volume of HTTP 429 (“Too Many Requests”) responses served specifically to AI bots. The blocks were not random. Training crawlers, which pull large amounts of data in bursts, were throttled. User-facing crawlers, which send human-paced requests during a live query, were often allowed through. This pattern suggests a systemic effort by hosting providers to protect server performance from high-impact AI bots.
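You can check the training-versus-user-facing pattern against your own logs. Below is a minimal Python sketch. It assumes you have already exported request logs (for example, from Cloudflare) and reduced them to (user_agent, status) pairs. The export step, the function names, and the token-to-role mapping in CRAWLER_ROLES are assumptions for illustration, not the method used in the case study. For each crawler role, the sketch reports the share of requests that were answered with a 429.

```python
from collections import Counter, defaultdict

# Assumed mapping (hypothetical): verify token names and their roles against
# each vendor's crawler documentation before relying on the results.
CRAWLER_ROLES = {
    "GPTBot": "training",
    "ClaudeBot": "training",
    "ChatGPT-User": "user-facing",
    "Claude-User": "user-facing",
    "Perplexity-User": "user-facing",
}


def crawler_role(user_agent):
    """Return the role of the first known token found in the UA, or None."""
    ua = user_agent.lower()
    for token, role in CRAWLER_ROLES.items():
        if token.lower() in ua:  # simple case-insensitive substring match
            return role
    return None


def share_429_by_role(records):
    """records: iterable of (user_agent, status) pairs from a log export.

    Returns {role: fraction of that role's requests answered with HTTP 429}.
    Requests from unrecognised user-agents (e.g. browsers) are ignored.
    """
    counts = defaultdict(Counter)
    for user_agent, status in records:
        role = crawler_role(user_agent)
        if role is None:
            continue
        counts[role]["total"] += 1
        if status == 429:
            counts[role]["429"] += 1
    return {role: c["429"] / c["total"] for role, c in counts.items()}
```

A high share for "training" alongside a low share for "user-facing" would match the pattern described above. If your logs contain other crawler tokens, add them to the mapping.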

Unmasking the Culprit: Why Your WAF Won’t Find It

Most site owners first check their Web Application Firewall (WAF) or a security plugin such as Wordfence or Solid Security. These plugins, however, run inside WordPress at the application layer. If the block fires at the platform edge (the infrastructure the host manages), the request never reaches the plugin, so the plugin’s logs stay empty.

Reproduction tests with curl confirmed the pattern. Requests sent with the ClaudeBot or GPTBot user-agent consistently received 429 errors, while a standard browser user-agent received 200 OK. The smoking gun was in the response headers: x-powered-by: WP Engine. The hosting platform itself was acting as the gatekeeper and enforcing its own block, regardless of customer-defined rules and robots.txt directives.
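You can also script the curl comparison. The sketch below uses only Python's standard library (urllib). It sends one request per user-agent, then prints the status code along with any x-powered-by or server header. The user-agent strings are illustrative approximations. The names USER_AGENTS, probe, fingerprint, ua_block_suspected, and run_ua_test are hypothetical. Check each crawler vendor's documentation for the current UA strings.

```python
import urllib.error
import urllib.request

# Illustrative user-agent strings (assumptions): check each vendor's published
# crawler documentation for the current exact values.
USER_AGENTS = {
    "browser": ("Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
                "(KHTML, like Gecko) Chrome/124.0 Safari/537.36"),
    "ClaudeBot": "Mozilla/5.0 (compatible; ClaudeBot/1.0; +claudebot@anthropic.com)",
    "GPTBot": "Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)",
}

# Response headers that can reveal which layer actually answered the request.
FINGERPRINT_HEADERS = ("x-powered-by", "server")


def probe(url, user_agent, timeout=10.0):
    """Send one GET with the given User-Agent; return (status, lowercased headers)."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status, {k.lower(): v for k, v in response.headers.items()}
    except urllib.error.HTTPError as err:
        # urlopen raises on 4xx/5xx; the status code and headers live on the exception.
        return err.code, {k.lower(): v for k, v in err.headers.items()}


def fingerprint(headers):
    """Keep only the headers useful for attributing a block to a layer."""
    return {name: headers[name] for name in FINGERPRINT_HEADERS if name in headers}


def ua_block_suspected(statuses):
    """True when the browser UA gets 200 but at least one bot UA gets 429."""
    bot_statuses = [s for label, s in statuses.items() if label != "browser"]
    return statuses.get("browser") == 200 and 429 in bot_statuses


def run_ua_test(url):
    """Probe the URL once per user-agent and print status plus fingerprint headers."""
    statuses = {}
    for label, user_agent in USER_AGENTS.items():
        status, headers = probe(url, user_agent)
        statuses[label] = status
        print(f"{label:10} {status} {fingerprint(headers)}")
    print("UA-based block suspected:", ua_block_suspected(statuses))
    return statuses
```

For example, run_ua_test("https://www.example.com/") prints one line per user-agent. Rate-based blocks may only trigger after several requests, so a single clean run does not prove that no block exists. Also note that sending a bot user-agent from your own machine tests only UA-based rules, not rules keyed to the crawlers' IP ranges.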

The Impact: Optimizing the Ceiling Without a Floor

This phenomenon creates a dangerous scenario for businesses. Companies are spending thousands of dollars on content updates, schema markup, and llms.txt files to improve AI visibility. However, if the platform-level block is active, these efforts are futile. You are essentially optimizing the “ceiling” of your visibility while the “floor” (crawl access) has been removed.

The correlation is stark: where bots have 100% access, citations appear at meaningful rates. Where bots are blocked, citation presence collapses. In the modern search landscape, treating AI access as optional is a strategic mistake akin to ignoring organic search in the early 2000s.

How to Audit Your Own Site for AI Blocks

If you use a managed WordPress host, you can perform a quick three-step audit to see if you are being silently throttled:

- Run a User-Agent Test: Use a command-line tool like curl to send multiple requests using an AI bot user-agent (e.g., ClaudeBot). If you receive 429 errors while a standard browser agent receives 200s, you have a UA-based block.
- Check Response Headers: Look for x-powered-by or server headers to identify if the block is coming from your host’s infrastructure.
- Contact Support with Evidence: If a block is found, open a ticket specifically mentioning that you have reproduced the 429 errors via curl and that they are not originating from your own Cloudflare or security plugin settings.
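The three audit steps can be combined into one repeatable script whose output is ready to paste into a support ticket. This is a minimal sketch using Python's standard library. The function names, the default of five attempts per user-agent, and the report layout are hypothetical choices. The script records status codes and the x-powered-by and server headers, but it cannot tell whether your own Cloudflare or plugin configuration is involved. Confirm that separately and add the statement to the ticket yourself.

```python
import urllib.error
import urllib.request
from collections import Counter
from datetime import datetime, timezone


def fetch(url, user_agent, timeout=10.0):
    """One GET request; returns (status, x-powered-by, server). Header lookup is case-insensitive."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            headers, status = response.headers, response.status
    except urllib.error.HTTPError as err:
        # 4xx/5xx responses (including 429) arrive as exceptions carrying code and headers.
        headers, status = err.headers, err.code
    return status, headers.get("x-powered-by"), headers.get("server")


def collect(url, user_agents, attempts=5):
    """Send `attempts` requests per user-agent; tally statuses and header fingerprints."""
    tallies, fingerprints = {}, set()
    for label, user_agent in user_agents.items():
        tallies[label] = Counter()
        for _ in range(attempts):
            status, powered_by, server = fetch(url, user_agent)
            tallies[label][status] += 1
            fingerprints.add((powered_by, server))
    return tallies, fingerprints


def evidence_report(url, tallies, fingerprints, tested_at=None):
    """Format the results as plain text suitable for pasting into a support ticket."""
    tested_at = tested_at or datetime.now(timezone.utc)
    lines = [f"Target: {url}", f"Tested at: {tested_at.isoformat(timespec='seconds')}"]
    for label, counts in tallies.items():
        summary = ", ".join(f"{status} x{n}" for status, n in sorted(counts.items()))
        lines.append(f"{label}: {summary}")
    for powered_by, server in sorted(fingerprints, key=str):
        lines.append(f"x-powered-by={powered_by or '-'} server={server or '-'}")
    return "\n".join(lines)
```

To use it, pass collect() a dictionary that maps a label (such as "browser" or "ClaudeBot") to a user-agent string, then hand its two return values to evidence_report(). Keep the attempt count small so the test itself does not add the kind of load the host is trying to limit.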

Conclusion: Moving Toward Transparency

Hosting providers argue that these blocks protect server integrity and keep customer costs down, but the primary issue is the lack of transparency. For agencies and B2B SaaS companies, AI bots are not “resource drains.” They are the primary audience. The ability to opt out of platform-level AI mitigation is no longer a luxury; it is a requirement for any brand serious about its presence in the AI-driven search era.